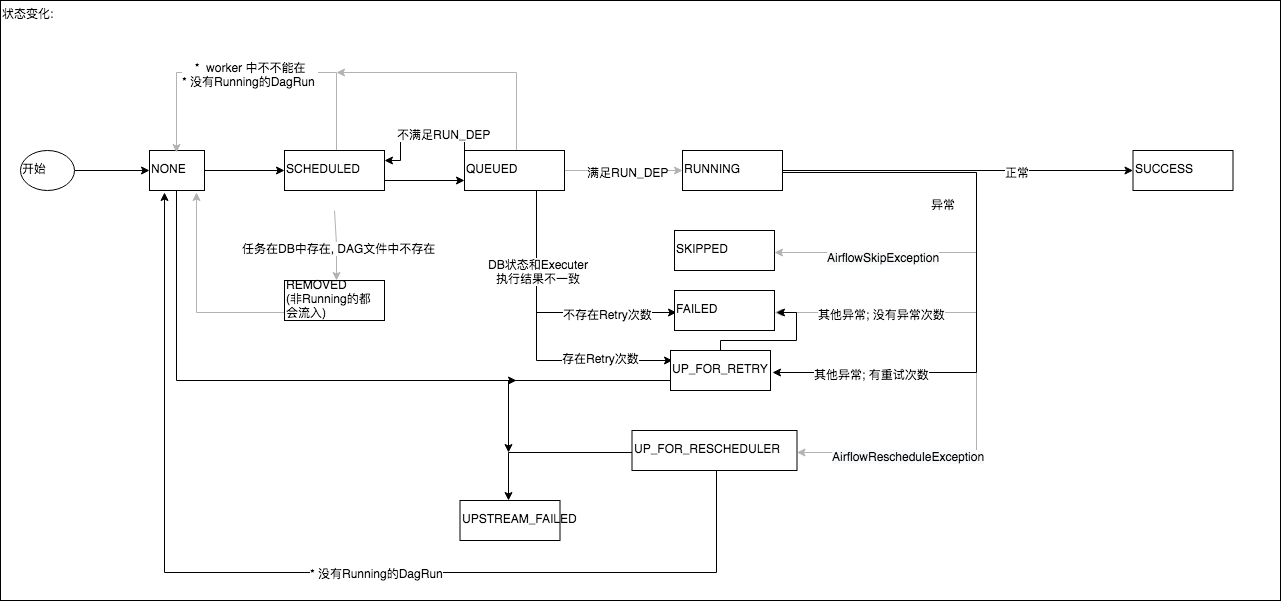

I also tried changing the logging level in the /airflow/airflow.cfg file from INFO to DEBUG, but that did not help either. Everything I found in my research points to the /airflow/logs/scheduler folder; however, this folder is empty. What I cannot figure out is where to find the log file in which the output of print statements is stored.

The log shows the following error (the traceback is incomplete in the source):

Oct 09 17:32:38 aa airflow: ERROR - Exception when executing execute_helper
Oct 09 17:32:38 aa airflow: Traceback (most recent call last):
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 1399, in _execute
Oct 09 17:32:38 aa airflow: self._execute_helper()
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 1470, in _execute_helper
Oct 09 17:32:38 aa airflow: if not self._validate_and_run_task_instances(simple_dag_bag=simple_dag_bag):
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/site-packages/airflow/jobs/scheduler_job.py", line 1532, in _validate_and_run_task_instances
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/site-packages/airflow/executors/base_executor.py", line 134, in heartbeat
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/site-packages/airflow/executors/sequential_executor.py", line 57, in sync
Oct 09 17:32:38 aa airflow: subprocess.check_call(command, close_fds=True)
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/subprocess.py", line 358, in check_call
Oct 09 17:32:38 aa airflow: retcode = call(*popenargs, **kwargs)
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/subprocess.py", line 339, in call
Oct 09 17:32:38 aa airflow: with Popen(*popenargs, **kwargs) as p:
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/subprocess.py", line 800, in __init__
Oct 09 17:32:38 aa airflow: restore_signals, start_new_session)
Oct 09 17:32:38 aa airflow: File "/home/ubuntu/anaconda3/envs/data_analysis_lab/lib/python3.7/subprocess.

Thankfully, starting from Airflow 1.9, logging can be configured easily, allowing you to put all of a DAG's logs into one file.

I start the scheduler with `nohup airflow scheduler`, redirecting its output to logs/scheduler.logs, and then I simply launch another worker on my local machine with `nohup airflow celery worker --without-gossip`, redirecting its output to logs/worker.logs. Both commands run in the background.

The Airflow configuration reference lists all the available Airflow configurations that you can set in the airflow.cfg file or using environment variables. Use the same configuration across all the Airflow components. Not every component requires every setting, but some settings must be identical everywhere; otherwise the components will not work as expected.

Composer produces the following logs:

- airflow: The uncategorized logs that Airflow pods generate.
- airflow-upgrade-db: The logs the Airflow database initialization job generates (previously airflow-database-init-job).
- airflow-scheduler: The logs the Airflow scheduler generates.
- dag-processor-manager: The logs of the DAG processor manager (the part of the scheduler that processes DAG files).
- airflow-triggerer: The logs the Airflow triggerer generates.

The type of agent the event was collected by.
logname (for example, airflow-worker): The name of the log to write to.

On Amazon MWAA, you install all Python dependencies by uploading a requirements.txt file to your Amazon S3 bucket, then specifying the version of the file on the Amazon MWAA console each time you update the file. Amazon MWAA runs pip3 install -r requirements.txt to install the Python dependencies on the Apache Airflow scheduler and each of the workers.

- Permissions - Your AWS account must have been granted access by your administrator to the AmazonMWAAFullConsoleAccess access control policy for your environment. In addition, your Amazon MWAA environment must be permitted by your execution role to access the AWS resources used by your environment.
- Access - If you require access to public repositories to install dependencies directly on the web server, your environment must be configured with ... For more information, see Apache Airflow access modes.
- Amazon S3 configuration - The Amazon S3 bucket used to store your DAGs, custom plugins in plugins.zip, and Python dependencies in requirements.txt must be configured with Public Access Blocked and Versioning Enabled.

To check the Apache Airflow log stream (console): Open the Environments page on the Amazon MWAA console. In the Monitoring pane, choose the log group for which you want to view logs, for example, the Airflow scheduler log group.
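For the question about where print output ends up, the following is a minimal sketch, not a definitive answer. It assumes an Airflow 1.10-style setup in which `logging_level` lives in the `[core]` section (it moved to `[logging]` in Airflow 2), any airflow.cfg option can be overridden through an `AIRFLOW__{SECTION}__{KEY}` environment variable, and task logs follow the default layout of one file per attempt under the base log folder. In that layout, output printed by a task normally lands in the task log rather than in logs/scheduler. The DAG id `example_dag`, the task id `print_task`, and both helper function names are hypothetical.

```python
from pathlib import PurePosixPath


def airflow_env_var(section: str, key: str) -> str:
    """Return the environment variable name that overrides [section] key in airflow.cfg."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


def task_log_path(base_log_folder: str, dag_id: str, task_id: str,
                  execution_ts: str, try_number: int) -> PurePosixPath:
    """Build the per-attempt task log path.

    Assumes the default template: dag_id/task_id/execution timestamp/try_number.log.
    A customized log_filename_template would produce a different path.
    """
    return (PurePosixPath(base_log_folder) / dag_id / task_id
            / execution_ts / f"{try_number}.log")


if __name__ == "__main__":
    # Environment variable equivalent of setting logging_level under [core].
    print(airflow_env_var("core", "logging_level"))
    # Where the first attempt of a hypothetical task would be logged.
    print(task_log_path("/airflow/logs", "example_dag", "print_task",
                        "2019-10-09T00:00:00+00:00", 1))
```

If the file computed this way does not exist, it is worth checking whether the task ever started: the traceback above fails inside the executor's `subprocess.check_call`, before any task code would have run.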
